Surprise – This Robotics Expert Doesn’t Think AI Will Destroy the World

Why robotics pioneer Benjie Holson believes AI art tools need more “common sense.”

Can you tell us who you are and how you got involved with generative AI?

Benjie Holson: My project Dimension Hopper is my first real foray into AI art, but I’ve done a bunch of related things.

I’m a roboticist. I’ve worked at a robotics startup (Anybots), Facebook and most recently Google X’s Everyday Robots. But I’ve also always had an artistic side. My parents were traveling puppeteers, and I grew up performing with them. I’ve always used art and illustration to help communicate at work, both in my own internal memos and for things like the Mission and Values for Everyday Robots. I wrote more about my AI assisted manual illustration process here.

I love playing with art where you have partial or indirect control. As a little kid I loved playing with the filters built into Kid Pix, and as a middle schooler, writing algorithmic art using QBASIC. (Dating myself here). AI art has been super interesting to me to read and watch, but only crossed the threshold of something I really wanted to play with once img2img and especially once ControlNet became available.

With an impressive background in robotics, how do your skills and knowledge translate to the world of Generative AI?

I’d say that one of my superpowers is looking at tools and seeing interesting ways to use them. I’m usually not the one to create the tool, but I’m often able to use it to do things that its creator didn’t imagine. That skill is made of two parts.

The first is noticing patterns between things and asking the question, “I wonder if I could make X do Y?” The trick here is to know the tools and the problems well enough so that your brain can make those connections. For tools, the best way to get to know them is to “play”.

At Everyday Robots I organized a weekly one-day hackathon for my team where we gave ourselves permission to “play” with the robots and do some things that felt silly and unimportant, but were super critical for getting to know the ins and outs of the tools and APIs we had.

The second is to work steadily towards the connection you want. With new things (like AI for robotics or generative AI) this is a ton of trial and error. You need to be able to see small improvements and explore near that area, but also know when it’s time to take a step back and try a different approach. For Dimension Hopper it was probably 20 hours between “I bet I can get 2D levels for a platform game using ControlNet” and the final product built into the game. I wrote a bunch about that process here.

The other similarity is that both have a pretty easy path to “works 80% of the time”. That’s why so many AI assisted workflows involve generating many more images than you need and carefully curating them. However, if you want to integrate it into a product or game, it needs to work well all the time. That’s a lot harder, but is a familiar struggle from robotics where we talk about completing the first 80% of the work, and then the second 80%.

You wrote about general purpose robots, do you think they can be combined with AGI (Artificial General Intelligence)? Would that be a good thing or not?

I don’t think there is a singular “General Intelligence” (artificial or otherwise). Even humans have many different kinds of intelligence working together. I think it extremely unlikely that we will ever have something that has the same level of intelligence as a human in all areas (for example we are super super specialized in reading each other’s emotional state but pretty bad at adding large numbers.) As we improve different aspects of computer intelligence many of those will be useful to robots.

One thing that has historically been really hard for robots is ‘common sense’. Programming robots for human environments is hard because there are basically infinite things that could happen and if you had to list them out and say what a robot should do about each of them you’d never finish. As I’ve been playing with large language models, they seem to have quite a bit of common sense ingrained in them which is pretty exciting, as it feels like a second piece of the puzzle of making robots work around people.

I’m (obviously) a robot enthusiast and optimist. Working close to the technology I’m not particularly worried about an ‘evil robot’ uprising: I think we will make sure to build tools that work with and for us. And I think that automating drudgery work is good for humanity in the medium and long term.

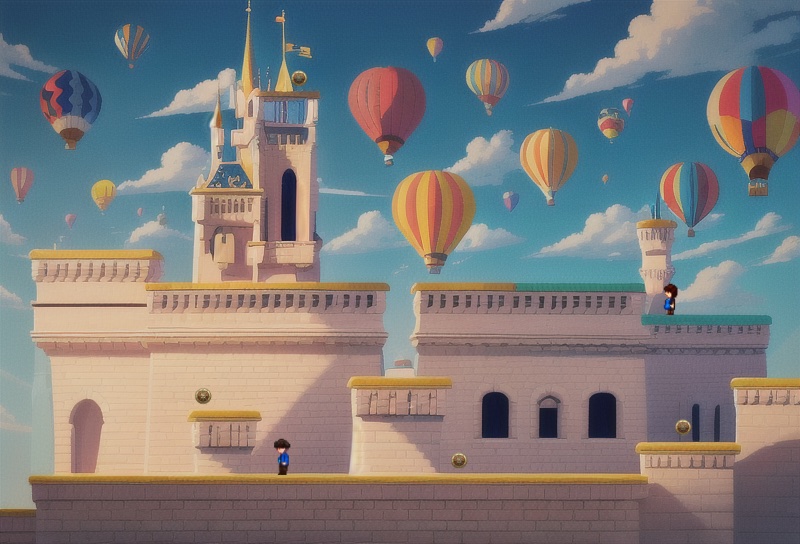

You are an indie game developer, I think, and your game “Dimension Hopper” looks really promising. Of course it looks like a simple platformer from the outside, but actually all the levels are generated by AI, players can generate more levels on the fly and customise them as well, and can even generate new characters! It really seems like an amazing proof of concept for what the future of platformers could be powered by new AI and ML technology. Can you tell us a bit more about what’s behind this project and what you’d like to achieve in the future?

I’ve always loved games that came with level editors. A big part of the project was to see if I could use Stable Diffusion to create a level editor that let anyone make beautifully illustrated levels. But I also wanted to create a game that I’d want to play. A limit of Dimension Hopper is that it really only shows one bit of information per square (wall/not-wall).
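As a rough illustration of what “one bit of information per square” can look like in practice, the sketch below expands a wall/not-wall grid into a small depth-hint image of the kind a depth-conditioned ControlNet could take as input. This is not Holson’s actual code: the level layout, the `TILE` size, and the convention that bright pixels are near the camera are all assumptions made for the example.

```python
# Hypothetical level layout: "#" is wall, "." is open space (one bit per square).
LEVEL = [
    "....",
    ".##.",
    "####",
]

TILE = 2            # assumed pixels per square, kept tiny for illustration
NEAR, FAR = 255, 0  # assumed convention: bright = close to the camera


def level_to_depth_hint(level, tile=TILE):
    """Expand each square into a tile x tile block of depth values."""
    hint = []
    for row in level:
        pixel_row = []
        for cell in row:
            pixel_row.extend([NEAR if cell == "#" else FAR] * tile)
        # Repeat the pixel row so each square is `tile` pixels tall.
        hint.extend(list(pixel_row) for _ in range(tile))
    return hint


hint = level_to_depth_hint(LEVEL)
print(len(hint), len(hint[0]))  # 6 8
```

Because every square carries a single bit, anything richer (a door, a spike, a switch) would need extra channels or a separate pass, which is the limit described above.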

I’ve been working on getting interactive objects into the game to turn it into a puzzle platformer, still with a level editor that lets anyone create and publish beautifully illustrated puzzles. But there are a ton of technical challenges in getting objects incorporated into the art where I need them.

What do you think is the next big AI-related thing that is going to happen in the next couple of weeks or months?

It is shocking that Google/Amazon voice assistants are not yet powered by transformer-based LLMs. That has to be coming in the next few months. Beyond that, my prediction for the next big thing is LLMs that are able to create pull requests for software projects based on prompts, rather than only being able to generate new text or rewrite small portions.

Once that exists and can be incorporated into a unit testing framework, software engineering is going to look pretty different and be a lot more productive. I recently wrote a bunch about my experience using text-based AI to help program my game here.

With your experience using the tools, you probably discovered a couple of tips and tricks. Which ones would you be ok to share with our audience?

I think a lot of getting to success is patience. An interesting challenge in getting the level generation working is that the fidelity of the depth-based ControlNet is very resolution dependent. Right now I’m trying to get spikes to render in my game and finding that I can’t get it to follow the depth hint at the size that I want. But if I 4x the resolution, render the spikes as an inpainting on a crop of that area, and then shrink them back down, I can get it to work.

That trick seems to work for other things too.
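One possible reading of the upscale trick, sketched in plain Python on 2D lists of pixel values: crop the area, enlarge it, inpaint at the larger size, shrink the result, and paste it back. The `inpaint_fn` argument is a hypothetical stand-in for the real Stable Diffusion inpainting call, and all function names here are assumptions for illustration rather than the author’s pipeline.

```python
def upscale(img, factor):
    """Nearest-neighbour upscale of a 2D list of pixel values."""
    out = []
    for row in img:
        wide = [p for p in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out


def downscale(img, factor):
    """Shrink by averaging each factor x factor block (integer pixels)."""
    h, w = len(img) // factor, len(img[0]) // factor
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            block = [img[y * factor + dy][x * factor + dx]
                     for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) // len(block))
        out.append(row)
    return out


def inpaint_small_detail(image, box, inpaint_fn, factor=4):
    """Crop `box`, upscale it, inpaint it, shrink it, paste it back."""
    x0, y0, x1, y1 = box
    crop = [row[x0:x1] for row in image[y0:y1]]
    detailed = inpaint_fn(upscale(crop, factor))  # model sees 4x the pixels
    small = downscale(detailed, factor)
    result = [list(row) for row in image]         # leave the input untouched
    for dy, row in enumerate(small):
        result[y0 + dy][x0:x1] = row
    return result


# Hypothetical stand-in for the model: paints every pixel with value 200.
seen_sizes = []

def fake_inpaint(big):
    seen_sizes.append((len(big), len(big[0])))
    return [[200 for _ in row] for row in big]


image = [[0] * 4 for _ in range(4)]
result = inpaint_small_detail(image, (1, 1, 3, 3), fake_inpaint)
```

The point of the detour is that the model only ever works at the resolution where it follows the depth hint well, while the game still receives an image at its native size.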

I’m also finding it’s much easier to inpaint objects away than to inpaint them in. So for objects that the user can toggle (like a door, for example), I’m having better luck generating the image with them in it and then creating an inpainted patch to remove them when the door is open.
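At render time, the “inpaint it away” approach might reduce to pasting a pre-generated patch over the base image whenever an object should be hidden. The sketch below assumes the patches were produced offline; `TogglePatch`, `render`, and the tiny pixel grids are hypothetical names and data for illustration only.

```python
from dataclasses import dataclass


@dataclass
class TogglePatch:
    """An inpainted rectangle that hides one object in the base image."""
    x: int
    y: int
    pixels: list  # 2D list of pixel values covering the object's area


def render(base, patches, hidden):
    """Return the base image with a patch pasted for every hidden object."""
    frame = [list(row) for row in base]
    for name in hidden:
        patch = patches[name]
        for dy, row in enumerate(patch.pixels):
            frame[patch.y + dy][patch.x:patch.x + len(row)] = row
    return frame


# The base image was generated WITH the door (value 9) in place.
base = [
    [1, 1, 1],
    [1, 9, 1],
    [1, 1, 1],
]
patches = {"door": TogglePatch(x=1, y=1, pixels=[[1]])}

closed = render(base, patches, hidden=set())     # door visible
opened = render(base, patches, hidden={"door"})  # door patched out
```

Storing the scene with every object present and one removal patch per object keeps the base art consistent, since only the patches differ between states.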

Lastly, I’ve been pretty happy using ControlNet’s preprocessors for other things. In the next version of Dimension Hopper there are trees and vegetation that I want the character to walk behind, visually, and I know where they are going to be but not their exact segmentation because the AI takes some liberties with the depth hint.

I’m using the depth-estimator preprocessor from ControlNet’s depth mode on the finished image to create a mask for the foliage in the foreground. I can then draw that foliage on top of the player character so the character appears to be part of the background image. It’s working pretty nicely, though I have to do the same upscale trick to get the detail I need for the mask.
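The compositing step could look roughly like the sketch below: threshold an estimated depth map into a foreground mask, draw the character, then redraw the masked background pixels on top. The depth values, the threshold, and the convention that larger values mean nearer to the camera are assumptions for the example; in the real workflow the depth map would come from the depth-estimator preprocessor.

```python
def foreground_mask(depth, threshold):
    """Mark pixels whose estimated depth is nearer than `threshold`."""
    # Assumption: larger depth values mean closer to the camera.
    return [[d >= threshold for d in row] for row in depth]


def composite(background, sprite, sprite_pos, mask):
    """Draw the sprite, then redraw masked foreground pixels over it."""
    frame = [list(row) for row in background]
    sx, sy = sprite_pos
    for dy, row in enumerate(sprite):
        for dx, pixel in enumerate(row):
            if pixel is not None:  # None marks a transparent sprite pixel
                frame[sy + dy][sx + dx] = pixel
    for y, row in enumerate(mask):
        for x, is_foreground in enumerate(row):
            if is_foreground:
                frame[y][x] = background[y][x]
    return frame


background = [[10, 20, 30]]   # the middle pixel is foliage
depth = [[0.1, 0.9, 0.2]]     # hypothetical depth estimate for the image
mask = foreground_mask(depth, threshold=0.5)

sprite = [[7, 7]]             # a two-pixel-wide character
frame = composite(background, sprite, (0, 0), mask)
print(frame)  # [[7, 20, 30]]
```

The character covers the first pixel but is hidden behind the foliage at the second, which is the “walk behind” effect described above.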

Where can people find out more about you?

The best place to find out more is to read my Substack.
